The Growing Importance of AI Governance

AI is now embedded in business operations across industries. McKinsey’s State of AI in 2025 report shows that 88% of organizations surveyed have adopted AI in at least one business function. However, this widespread adoption brings equally widespread concerns. Organizations are grappling with algorithmic bias, data misuse, model vulnerabilities, and a lack of accountability. High-profile failures, such as biased algorithms and unsafe autonomous decisions, have made it clear that innovation without governance poses significant risks.

In this new reality, success is no longer defined by how quickly organizations deploy AI, but by how responsibly they manage it. Trust and governance, not speed, have become the true differentiators. This shift places cybersecurity professionals at the center of AI transformation. No longer confined to protecting networks and endpoints, they are now expected to safeguard data integrity, model reliability, and ethical AI use. The question is, “Are they ready?”

Why Trust is the Foundation of AI Adoption

Trust is not a soft concept. It is a measurable business imperative. According to PwC, 85% of consumers say they will not engage with a company if they have concerns about its data practices.

In the context of AI, trust determines adoption, scalability, and long-term viability. When AI systems operate without proper governance, the consequences can be severe:

- Financial losses from flawed automated decisions

- Reputational damage due to biased or unethical outcomes

- Regulatory penalties for non-compliance with frameworks like GDPR or emerging AI laws

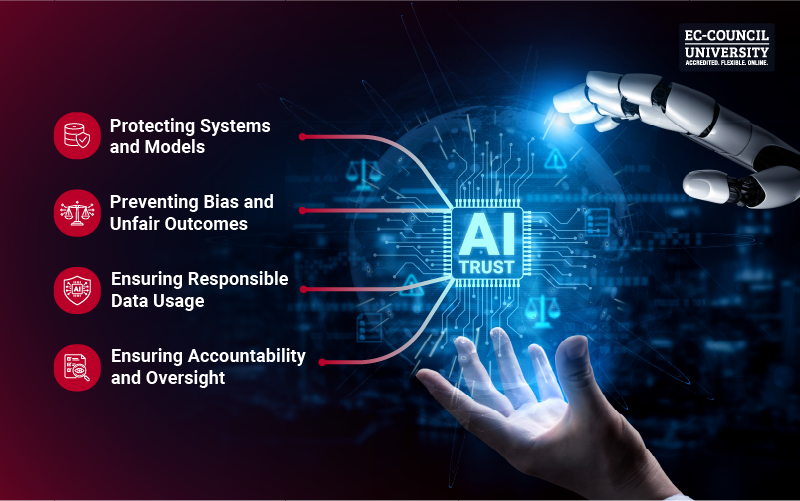

AI trust sits at the intersection of:

- Cybersecurity: Protecting systems and models

- Privacy: Ensuring responsible data usage

- Ethics: Preventing bias and unfair outcomes

- Governance: Ensuring accountability and oversight

Cybersecurity professionals are uniquely positioned to unify these domains, making them essential architects of AI trust.

The New Threat Landscape Introduced by AI

AI is a useful tool, but it is also a formidable attack surface. Threat actors are using AI to scale cyberattacks, while AI systems themselves introduce new vulnerabilities.

Key AI-Driven Threats:

- Adversarial Attacks: Manipulating inputs to deceive AI models

- Data Poisoning: Injecting malicious data during training

- Model Theft: Extracting proprietary models via API abuse

- Deepfakes and Synthetic Media: Increasing sophistication of social engineering

- Automated Cyberattacks: AI-driven malware and phishing campaigns
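To make the first threat on this list concrete, here is a minimal sketch of an adversarial input against a toy linear classifier. The weights, bias, and input values are invented for illustration and do not come from any real model; the perturbation follows the general idea of nudging each feature slightly in the direction that lowers the model's score.

```python
# Toy linear classifier: a positive score means "approve", otherwise "reject".
# All numbers below are hypothetical and chosen only for illustration.

def score(weights, bias, x):
    """Return the raw decision score w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def adversarial_shift(weights, x, epsilon):
    """Move each feature by at most epsilon in the direction that lowers the score."""
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

weights = [0.8, -0.5, 0.3]
bias = -0.1
x = [0.4, 0.2, 0.1]          # original input, classified as "approve"
epsilon = 0.15               # maximum change allowed per feature

x_adv = adversarial_shift(weights, x, epsilon)

print("original score:   ", score(weights, bias, x))
print("adversarial score:", score(weights, bias, x_adv))
```

Each feature changes by no more than 0.15, yet the score crosses the decision boundary and the classification flips. Real attacks on deep models rely on gradients rather than fixed weights, but the governance implication is the same: small, hard-to-notice input changes can alter automated decisions.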

The Data Behind the Risk:

- A 2025 survey by Gartner found that 62% of organizations experienced an attack involving AI-powered social engineering or automated processes.

- The number of deepfake attacks detected across all industries worldwide grew tenfold between 2022 and 2023, per Sumsub.

- IBM’s Cost of a Data Breach report states that the average cost of a data breach reached $4.4 million in 2025, with AI-related risks contributing to rising complexity.

These trends underscore the reality that AI amplifies both capability and risk.

What AI Governance Means for Cybersecurity Professionals

AI governance is often misunderstood as a compliance checklist. In reality, it is a continuous, lifecycle-driven discipline.

Beyond Policies: Operational Governance

Effective AI governance includes:

- Defining clear ownership across teams

- Embedding security into every stage of the AI lifecycle

- Establishing monitoring, auditing, and incident response mechanisms

Security Across the AI Lifecycle:

- Data Layer: Data integrity, privacy, and provenance

- Model Layer: Robustness, fairness, and explainability

- Deployment Layer: Secure APIs, access control, and monitoring
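As one illustration of a data-layer control, the sketch below records a SHA-256 fingerprint for each training file and later reports any file whose content no longer matches. The file names and contents are hypothetical, and the data is held in memory to keep the example self-contained; a real pipeline would read files from storage and protect the manifest itself from tampering.

```python
import hashlib

def fingerprint(content):
    """Return the SHA-256 hex digest of a bytes object."""
    return hashlib.sha256(content).hexdigest()

def build_manifest(files):
    """Map each file name to the fingerprint of its content."""
    return {name: fingerprint(content) for name, content in files.items()}

def find_changes(manifest, files):
    """Return (changed_or_missing, unexpected) file names, each sorted."""
    changed = [
        name for name in manifest
        if name not in files or fingerprint(files[name]) != manifest[name]
    ]
    unexpected = [name for name in files if name not in manifest]
    return sorted(changed), sorted(unexpected)

# Hypothetical training data captured at approval time.
approved = {
    "customers.csv": b"id,income\n1,52000\n2,61000\n",
    "labels.csv": b"id,approved\n1,1\n2,0\n",
}
manifest = build_manifest(approved)

# Later, before training: one label has been flipped and a new file has appeared.
current = dict(approved)
current["labels.csv"] = b"id,approved\n1,1\n2,1\n"
current["extra.csv"] = b"id,income\n3,99999\n"

changed, unexpected = find_changes(manifest, current)
print("changed or missing:", changed)
print("unexpected:", unexpected)
```

A check like this does not detect data that was poisoned before the manifest was created, so it complements, rather than replaces, provenance review of the original sources.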

Core Principles:

- Accountability: Who is responsible when AI fails?

- Transparency: Can decisions be explained and audited?

- Auditability: Are there logs, controls, and traceability?
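The auditability principle can be sketched as a tamper-evident decision log, in which each entry stores a hash of the previous entry so that later alterations become detectable. This is a simplified illustration under assumed record fields (`model`, `input_id`, `decision`), not a description of any particular product or standard.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def _entry_hash(prev_hash, record):
    """Hash the previous entry's hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_entry(log, record):
    """Append a record to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })

def verify_chain(log):
    """Return True only if every entry is intact and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical AI decisions being logged.
audit_log = []
append_entry(audit_log, {"model": "credit-v1", "input_id": 101, "decision": "approve"})
append_entry(audit_log, {"model": "credit-v1", "input_id": 102, "decision": "reject"})

print("log intact:", verify_chain(audit_log))
```

If someone later edits a logged decision, `verify_chain` returns `False` because the stored hash no longer matches the record. In practice the latest hash would also need to be stored somewhere the log's operators cannot rewrite.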

AI governance must also align with broader enterprise risk management (ERM) strategies to ensure AI risks are treated with the same rigor as financial or operational risks.

“The future of cybersecurity leadership will be about striking the right balance — trusting AI while maintaining human oversight.”

- Timothy Youngblood (CISO, Astrix Security | Former CISO, McDonald’s)

Are Cybersecurity Professionals Ready for AI Governance?

The short answer is “not yet.”

ISC² reports a global cybersecurity workforce gap of over 4 million professionals, which already makes it difficult to meet current security demands. Adding AI governance to the equation widens that gap further.

Many cybersecurity professionals lack formal training in AI and machine learning, are unfamiliar with model-specific vulnerabilities, and have limited exposure to ethical and regulatory considerations. However, the scope for career growth in this area is immense, as they already possess:

- Risk management expertise

- Threat modeling capabilities

- Security architecture experience

With targeted upskilling, they can transform into AI governance leaders.

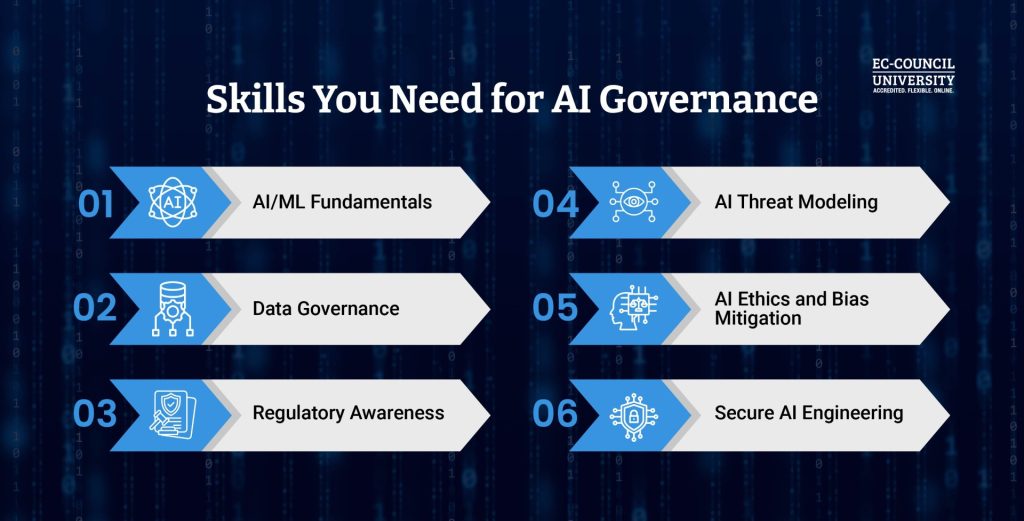

Core Skills Cybersecurity Professionals Need for AI Governance

To remain relevant and lead, professionals must expand their skill set beyond traditional cybersecurity. With AI in the picture, the essential skill areas now include:

- AI/ML Fundamentals: Understanding how models are trained and deployed

- AI Threat Modeling: Identifying risks unique to AI systems

- Data Governance: Managing data quality, lineage, and compliance

- AI Ethics and Bias Mitigation: Ensuring fairness and accountability

- Regulatory Awareness: Knowledge of evolving AI laws and standards

- Secure AI Engineering: Embedding security into AI pipelines

How ECCU Prepares Professionals to Lead AI Governance

At EC-Council University (ECCU), developing leadership capabilities is given the same priority as gaining technical cybersecurity skills and knowledge. ECCU’s online degree programs incorporate:

- AI security strategies and governance frameworks

- Risk management aligned with enterprise objectives

- Real-world scenarios involving AI threats and compliance challenges

Programs like the Master of Science in Cyber Security and the Master of Business Administration (Cybersecurity) now include valuable AI certifications as part of the coursework. This helps bridge the gap between technical execution and strategic decision-making, enabling graduates to govern AI systems responsibly.

The Role of Education in Building Trustworthy AI

AI adoption is accelerating faster than workforce readiness. The World Economic Forum’s Future of Jobs Report 2020 projected that 50% of all employees would need reskilling by 2025 due to technological shifts.

Why Education Is Critical:

- AI risks evolve faster than traditional security threats

- Governance requires cross-disciplinary knowledge

- Leadership roles demand both technical and strategic fluency

EC-Council University’s cybersecurity education connects the dots between:

- Advanced cybersecurity practices

- AI-specific risk frameworks

- Business and governance strategy

ECCU’s approach focuses on developing future-ready cybersecurity leaders, not just practitioners.

The Future of AI Trust, Governance, and Cybersecurity

AI will continue to redefine industries, but trust will determine its trajectory. The world is moving toward:

- Regulated AI ecosystems

- Standardized governance frameworks

- Greater accountability for AI outcomes

Cybersecurity will serve as the backbone of this transformation.

Organizations that invest in AI governance today will lead tomorrow. Those that hesitate will face escalating technical, legal, and reputational risks.

Gain AI Governance Expertise at ECCU

Cybersecurity professionals must evolve from digital defenders to governors of intelligent systems. This requires new skills, new mindsets, and a commitment to continuous learning.

The urgency is clear:

- AI risks are growing

- Governance expectations are rising

- The talent gap is widening

EC-Council University (ECCU) is actively addressing these realities by equipping professionals to lead with integrity, accountability, and strategic vision.

Frequently Asked Questions

Why is trust important in AI adoption?

Trust ensures that AI systems are reliable, ethical, and secure. Without trust, organizations risk reputational damage, regulatory penalties, and reduced user adoption.

What are the key AI-driven security risks?

Key risks include adversarial attacks, data poisoning, model theft, deepfakes, and AI-powered phishing campaigns.

What is AI governance?

AI governance involves managing AI risks through policies, controls, and lifecycle security practices to ensure accountability, transparency, and compliance.

Do cybersecurity professionals need AI knowledge?

Yes. Understanding AI/ML concepts, threats, and governance frameworks is essential for securing modern digital environments.

How can cybersecurity professionals prepare for AI governance roles?

By upskilling in AI security, AI governance and ethics, and risk management through structured education programs like those offered by ECCU.